
A team of students worked with the Associated Press to increase metadata accuracy, improve media discoverability, reduce customer complaints, and build ground truth.

The Associated Press (AP) publishes 2,000 news stories each day, covering everything from international politics to incendiary pop stars, but those articles are only effective if readers can find them.

To help content appear in relevant web searches, the AP applies metadata tags to upwards of 100,000 pieces of news media each day. But with about 200,000 different tags to choose from (out of billions of possible people, places, and things) and no clear way to ensure metadata accuracy, the tags are sometimes ineffective, preventing relevant stories from reaching readers.

A team of students in the computational science and engineering master’s program offered by the Institute for Applied Computational Science (IACS) at the Harvard John A. Paulson School of Engineering and Applied Sciences spent the spring semester working with the AP to increase metadata accuracy, improve media discoverability, reduce customer complaints, and build ground truth.

“It is very important that tags are assigned to articles correctly because if they are not, relevant articles will not show up in search results,” said Nripsuta (Ani) Saxena, M.E. ’18. “For a news organization, search relevance is so important because that is how they get readers to their website.”

Metatags are pieces of information added to the code of a web page that describe the page’s content, without actually appearing on the page itself. Tags like “NFL,” “Hillary Clinton,” or “London” might be added to a news story to help tell search engines what the story is about, explained Andrew Lund, S.M. ’18.

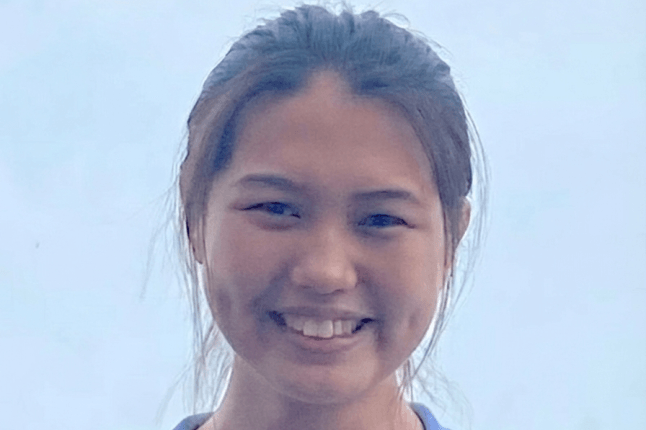

Students (from left) Nripsuta Saxena, Andrew Lund, Divyam Misra, and Anjali Fernandes worked with the Associated Press to improve the accuracy of metadata tags on news stories, which helps relevant stories appear in users' web searches.

Currently, the AP Tagging Service assigns metadata tags to an article by drawing from a list of 200,000 manually updated terms, ranging from corporate entities to names of public figures. The software uses more than 200,000 if/then rules to identify other relevant topics. However, those rules fire based only on whether a certain term appears in the text, so they can easily apply a tag when the term is used out of context, Lund said.
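To make the out-of-context problem concrete, here is a minimal sketch of a term-presence rule tagger. The rules, tag names, and the `rule_based_tags` function are hypothetical illustrations, not the AP Tagging Service's actual vocabulary or logic. The point is only that a rule which fires whenever a word appears cannot tell a basketball player from a country.

```python
import re

# Hypothetical if/then rules: if the term appears, then apply the tag.
# These are NOT the AP's real rules; they only illustrate the mechanism.
RULES = {
    "jordan": "Jordan (country)",
    "apple": "Apple Inc.",
    "london": "London",
}


def rule_based_tags(text: str) -> list[str]:
    """Return every tag whose trigger term appears in the text as a whole word."""
    lowered = text.lower()
    tags = []
    for term, tag in RULES.items():
        # \b word boundaries keep "apple" from matching inside "pineapple".
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            tags.append(tag)
    return tags


story = "Michael Jordan ate an apple before the game."
print(rule_based_tags(story))
# ['Jordan (country)', 'Apple Inc.']  (both tags are out of context)
```

Both rules fire correctly by their own logic, yet neither tag describes the story, which is the kind of error a term-presence approach cannot catch on its own.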

The students developed a mostly automated metatagging system that uses named entity extraction to identify and isolate people, places, organizations, companies, and other proper nouns in a piece of text.
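Production named entity recognition relies on trained statistical models, so the sketch below is only a crude stand-in: a hypothetical `candidate_entities` function that treats runs of capitalized words as candidate proper nouns and skips a lone capitalized word at the start of a sentence. It is not the students' system, and it will both miss entities and produce false candidates, but it shows the basic idea of isolating names from running text without a predefined tag list.

```python
import re

# A run of one or more capitalized words, e.g. "Hillary Clinton", "London", "NFL".
# The leading \b avoids matching mid-word capitals such as the "P" in "iPhone".
CAPITALIZED_RUN = re.compile(r"\b[A-Z][A-Za-z]+(?:\s+[A-Z][A-Za-z]+)*")


def candidate_entities(text: str) -> list[str]:
    """Return capitalized word runs as candidate proper nouns (heuristic only)."""
    entities = []
    for match in CAPITALIZED_RUN.finditer(text):
        phrase = match.group()
        # Check whether the match begins a sentence (start of text or after . ! ?).
        preceding = text[: match.start()].rstrip()
        at_sentence_start = preceding == "" or preceding[-1] in ".!?"
        if at_sentence_start and " " not in phrase:
            # A single capitalized word opening a sentence ("The", "Officials")
            # is usually just ordinary capitalization, so skip it.
            continue
        entities.append(phrase)
    return entities


text = "The senator met Hillary Clinton in London. Officials from the NFL attended."
print(candidate_entities(text))
# ['Hillary Clinton', 'London', 'NFL']
```

A trained NER model would additionally label each entity as a person, place, or organization, which this heuristic does not attempt.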

“The AP misses a lot of local granularity of specific people who might be in articles, but might not be in their list of tags,” said Lund. “Using named entity recognition, if an article is about a specific person, our system will find it.”

The students’ tool goes a step further by sorting tags according to their prominence within an article, Lund explained. Each tag pulled automatically from the story is weighted first locally, within the article (a term near the top of the story is likely more important than one at the bottom), and then globally, across a set of 50,000 articles (a tag that appears in thousands of articles is likely more important than one that appears in only one or two).
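The two-stage weighting can be sketched as the product of a local score and a global score. The formulas here are assumptions chosen for illustration: local weight falls linearly with the position of a tag's first mention, and global weight grows with the logarithm of the number of corpus articles carrying the tag, following the article's description that widely used tags count for more. None of the function names come from the students' tool.

```python
import math
from collections import Counter


def local_weight(tag: str, text: str) -> float:
    """Position-based weight: 1.0 at the top of the article, near 0 at the bottom.

    Returns 0.0 if the tag string never appears in the text.
    """
    position = text.find(tag)
    if position == -1:
        return 0.0
    return 1.0 - position / len(text)


def document_frequencies(corpus_tags: list[set[str]]) -> Counter:
    """Count how many articles in the corpus carry each tag."""
    counts: Counter = Counter()
    for tags in corpus_tags:
        counts.update(tags)
    return counts


def global_weight(tag: str, doc_freq: Counter) -> float:
    """Grows with the number of articles using the tag, damped by a logarithm.

    A tag unseen in the corpus (count 0) still gets weight 1.0, so newly
    extracted entities are not zeroed out.
    """
    return 1.0 + math.log1p(doc_freq[tag])


def rank_tags(tags: list[str], text: str, doc_freq: Counter) -> list[str]:
    """Sort tags by local weight times global weight, highest first."""
    scores = {t: local_weight(t, text) * global_weight(t, doc_freq) for t in tags}
    return sorted(tags, key=lambda t: scores[t], reverse=True)


# Tiny stand-in for the 50,000-article corpus (hypothetical data).
corpus = [{"NFL", "London"}, {"NFL"}, {"NFL"}]
freqs = document_frequencies(corpus)
story = "London police said a fan of the NFL was detained after the game."
print(rank_tags(["NFL", "London"], story, freqs))
```

Multiplying the weights means a tag must score reasonably on both axes to rank highly. A weighted sum would be an equally plausible design choice.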

The students also developed a user interface that shows the tags identified by the AP’s current system and tags identified by their tool. The interface enables users to upvote or downvote tags and save that feedback.

The AP had been hand-checking a few articles periodically to see how the tagging system was performing, but building a ground truth set, a collection of articles whose correct tags have been verified, will make it much easier for the organization to determine where the system is likely to go wrong and to make future improvements, Saxena explained.
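Once a ground truth set exists, checking a tagger against it is straightforward. The sketch below, with a hypothetical `evaluate` function and made-up data, computes micro-averaged precision and recall over the verified articles and counts which tags are most often applied wrongly or missed, one way to see where a system tends to go wrong.

```python
from collections import Counter


def evaluate(predicted: dict[str, set[str]], truth: dict[str, set[str]]):
    """Compare predicted tags with verified tags for every ground-truth article.

    Returns (precision, recall, wrongly_applied, missed), where the last two
    are Counters of tags that were applied incorrectly or left out.
    """
    tp = fp = fn = 0
    wrongly_applied: Counter = Counter()
    missed: Counter = Counter()
    for article_id, correct in truth.items():
        guessed = predicted.get(article_id, set())
        tp += len(guessed & correct)   # tags the system got right
        fp += len(guessed - correct)   # tags it applied but should not have
        fn += len(correct - guessed)   # verified tags it failed to apply
        wrongly_applied.update(guessed - correct)
        missed.update(correct - guessed)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, wrongly_applied, missed


truth = {"a1": {"NFL", "Michael Jordan"}, "a2": {"London"}}
predicted = {"a1": {"NFL", "Jordan (country)"}, "a2": {"London"}}
print(evaluate(predicted, truth))
```

Sorting `wrongly_applied` with `most_common()` would surface the tags that misfire most often across the set.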

For a news organization like the AP, the accuracy of metadata tags on articles is vital, since web searches drive a great deal of traffic to the AP website.

Looking forward, the AP may integrate the students’ work into a tool it is currently developing, or work with CSE students next year to produce an unsupervised system that will dynamically change the sorting of tags based on users’ upvotes and downvotes, Lund said.
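As a rough illustration of vote-driven re-sorting, the hypothetical `TagFeedback` class below stores upvotes and downvotes per article and tag, then scales each tag's base score by a Laplace-smoothed approval rate, so a tag with no votes keeps its original score. This is a simple heuristic chosen for the example, not the system the AP or the students have described.

```python
from collections import defaultdict


class TagFeedback:
    """Stores up/down votes per (article, tag) and re-sorts tags with them."""

    def __init__(self) -> None:
        # (article_id, tag) -> [upvotes, downvotes]
        self.votes: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])

    def record(self, article_id: str, tag: str, upvote: bool) -> None:
        """Save one user's upvote or downvote for a tag on an article."""
        self.votes[(article_id, tag)][0 if upvote else 1] += 1

    def adjusted_score(self, article_id: str, tag: str, base_score: float) -> float:
        """Scale the base score by a smoothed approval rate.

        With no votes, approval is (0 + 1) / (0 + 2) = 0.5, and the factor of 2
        leaves the base score unchanged.
        """
        up, down = self.votes.get((article_id, tag), (0, 0))
        approval = (up + 1) / (up + down + 2)
        return base_score * 2 * approval

    def rerank(self, article_id: str, base_scores: dict[str, float]) -> list[str]:
        """Return tags sorted by vote-adjusted score, highest first."""
        return sorted(
            base_scores,
            key=lambda t: self.adjusted_score(article_id, t, base_scores[t]),
            reverse=True,
        )


feedback = TagFeedback()
scores = {"Jordan (country)": 0.9, "Michael Jordan": 0.8}
feedback.record("a1", "Jordan (country)", upvote=False)
feedback.record("a1", "Jordan (country)", upvote=False)
feedback.record("a1", "Michael Jordan", upvote=True)
print(feedback.rerank("a1", scores))
```

Smoothing keeps a single early vote from swinging a tag's position too far.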

While it was challenging to narrow the scope of the solution to meet the needs of the AP, Saxena said it was rewarding to be able to use data science skills to help a real-world client solve a problem.

“When you are taking classes, you have problem sets and projects that are very well defined—problems have an answer and projects have a clear goal,” Saxena said. “This was a very nice introduction into the real world, in the sense that problems are not very well structured. Sometimes, you’re not even sure what the problem is.”

The team also included CSE student Divyam Misra and computer science concentrator Anjali Fernandes, A.B. ’18.



Press Contact

Adam Zewe | 617-496-5878 | azewe@seas.harvard.edu