
Key Takeaways

- Harvard SEAS researchers and a multi-university team that includes information theorists and experts in wireless communications, optimization theory, machine learning, and robotics introduce “cy-trust” as a quantitative measure of how much a robot or vehicle in a networked system should trust information from another agent before acting.

- Their paper argues for cy-trust to be embedded in system designs for ride-share fleets, truck platoons and other automated cyber-physical systems.

From birds flying in formation to students working on a group project, the functioning of a group requires not only coordination and communication but also trust — each member must be confident in the others.

The same is true for networks of connected machines, which are spreading rapidly, from self-driving rideshare fleets to smart power grids.

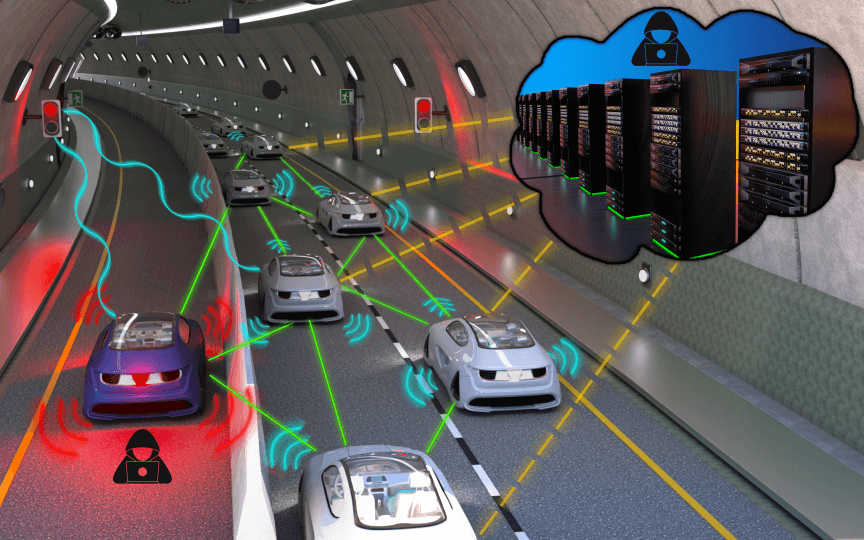

An illustration of how adversarial agents could misreport sensor readings or spoof data to strategically alter the behavior of connected vehicle fleets.

Harvard computer scientists, together with a multi-university team that includes information theorists and experts in wireless communications, optimization theory, machine learning, and robotics, are presenting their vision for incorporating the concept of trust into emerging cyber-physical systems. A new paper led by Stephanie Gil, the John L. Loeb Associate Professor of Engineering and Applied Sciences in the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) and associate faculty member in the Kempner Institute, proposes a foundational framework to help multi-agent, connected systems decide what information they can trust before they act.

“Cyber-physical systems are going to become very pervasive,” said Gil, who co-authored the paper in Proceedings of the IEEE. “The question is, how do we secure these systems? How do we make sure they are going to be resilient as they go into the real world? This is something we had to learn from making internet systems secure.”

Cy-trust as a measure of trustworthiness

The paper introduces the concept of “cy-trust”: a quantitative measure of how much one autonomous agent, such as a vehicle or robot, should trust another agent or stream of data when making decisions. The researchers argue that establishing this trust framework is paramount to developing secure and reliable connected systems in the future.

Top from left: co-authors Stephanie Gil, Arsenia Chorti, Michal Yemini. Bottom from left: H. Vincent Poor, Angelia Nedić, and Andrea J. Goldsmith

Traditional network security infrastructure that protects software and data from misuse or theft typically focuses on who is allowed access to a system. But for fleets of robots, vehicles, or smart devices that must constantly coordinate in real time, Gil and colleagues argue that these traditional safeguards are not enough.

The paper surveys threats that are specific to multi-agent, cyber-physical systems. One is malicious or “greedy” behavior by individual agents that disrupts coordination: for example, an autonomous car that speeds up to cut in line and creates a dangerous merge. Another is false or manipulated data in crowdsourced traffic maps, which could help a hacker reroute traffic for nefarious purposes. And in fleets of agents performing search-and-rescue operations, a hacked agent could spoof its location, claiming to be somewhere it is not and causing gaps in surveillance.

All of these examples could lead to real harm in the physical world by causing accidents and endangering pedestrians or emergency responders. Yet the paper also points out that such “embodied” systems offer a key advantage: each agent carries its own sensors and computers on board.

Gil and her co-authors propose that onboard sensors, such as cameras, lidar, radar, and GPS, could be used to cross-validate information received from other agents or from the cloud as a built-in measure of trust. Applying signal processing to the wireless communications an agent receives could also allow each vehicle or robot to validate the origin of the data.
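As a rough illustration of that cross-validation idea, the sketch below compares a position claimed by another agent with a range reading from the receiving vehicle's own sensor and turns the mismatch into a score between 0 and 1. The function name `position_trust`, the Gaussian scoring rule, and the `sigma` noise parameter are assumptions made for this example; the article does not describe how the paper computes such a score.

```python
import math


def position_trust(own_pos, claimed_pos, measured_range, sigma=1.0):
    """Score how well a claimed position agrees with an onboard range reading.

    Hypothetical scoring rule: a Gaussian kernel on the range residual,
    so perfect agreement gives 1.0 and large mismatches approach 0.0.
    sigma is an assumed sensor noise level in meters.
    """
    expected_range = math.dist(own_pos, claimed_pos)  # range implied by the claim
    residual = measured_range - expected_range        # disagreement with the sensor
    return math.exp(-0.5 * (residual / sigma) ** 2)


# A neighbor claims to be at (30, 40), i.e. 50 m away from a vehicle at the origin.
honest = position_trust((0.0, 0.0), (30.0, 40.0), measured_range=50.5)
spoofed = position_trust((0.0, 0.0), (30.0, 40.0), measured_range=12.0)
print(f"consistent claim: {honest:.3f}, inconsistent claim: {spoofed:.3g}")
```

A claim that matches the onboard reading to within sensor noise scores close to 1, while a claim that is tens of meters off scores essentially 0.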

Gil and collaborators envision each agent assigning a numerical trust value between 0 and 1 to data from other agents, based on cues from sensing, context, network behavior, and past experience. Those values would determine how strongly each piece of information should influence the agent’s decisions; for example, if a vehicle in a rideshare fleet had a low trust value, the other vehicles might ignore its reports to protect the overall system from collapse.
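One simple way to picture trust values shaping a decision is a trust-weighted average, sketched below. This is an illustration only, not the method from the paper: the function `trust_weighted_estimate`, the `min_trust` cutoff, and the speed numbers are all made up for the example.

```python
def trust_weighted_estimate(own_value, reports, own_weight=1.0, min_trust=0.2):
    """Fuse an agent's own reading with reports weighted by trust in [0, 1].

    reports is a list of (value, trust) pairs. Reports whose trust falls
    below min_trust (an assumed cutoff) are ignored entirely.
    """
    total = own_weight * own_value
    weight_sum = own_weight
    for value, trust in reports:
        if not 0.0 <= trust <= 1.0:
            raise ValueError("trust values must lie between 0 and 1")
        if trust < min_trust:
            continue  # low-trust report has no influence
        total += trust * value
        weight_sum += trust
    return total / weight_sum


# Hypothetical traffic-speed reports in m/s; the third sender has low trust.
reports = [(21.0, 0.9), (19.0, 0.8), (60.0, 0.05)]
estimate = trust_weighted_estimate(20.0, reports)
plain_mean = (20.0 + sum(value for value, _ in reports)) / (1 + len(reports))
print(f"trust-weighted: {estimate:.2f}, unweighted mean: {plain_mean:.2f}")
```

In this toy case the outlier from the low-trust sender is dropped, so the fused estimate stays near 20 m/s, whereas the unweighted mean is pulled up to 30 m/s.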

“There’s a clear parallel between this concept of cy-trust, and the familiar kind of psychological trust,” Gil said. “The idea is that psychological trust is a way of accepting risk in an environment where some level of risk is inevitable, you don’t have full access to information, but you still need to make decisions.”

Putting cy-trust into practice in the lab

Gil is already testing these ideas in her lab.

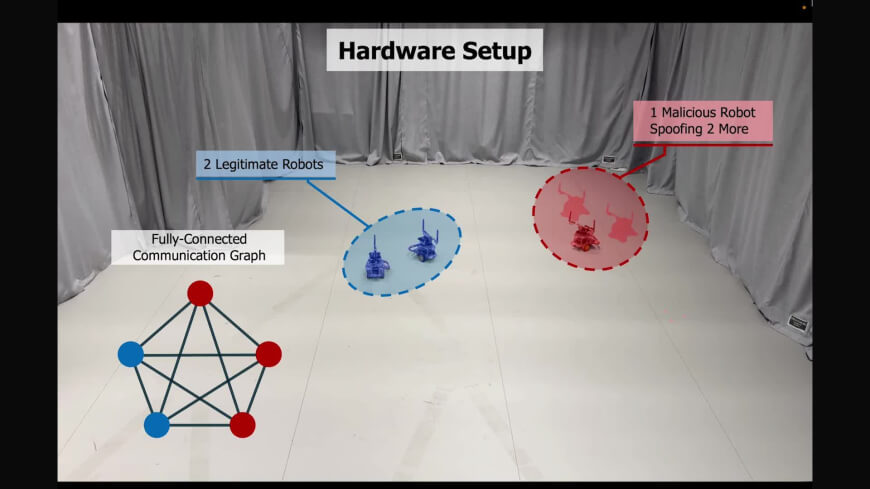

In one set of experiments, a group of blue-team robots represents cooperative agents trying to reach agreement (for example, on a heading direction so they can move as a platoon), while a group of red-team robots mimics attackers disrupting the network by creating fake identities in a “Sybil attack.”

Typically, networked robots would simply accept every message and run a standard distributed consensus or optimization algorithm, making them vulnerable to attack. The red-team robots could nudge the group into unsafe or inefficient behavior by pretending to be many different agents or by falsifying their positions.

In Gil’s experiments, each blue-team robot listens to incoming wireless messages, performs signal processing on the physical wireless signal, and determines whether messages that appear to come from many distinct agents in fact originate from the same physical source. This generates a trust score for each purported agent.

Over time, the system learns which agents are likely malicious and chooses to ignore their inputs, allowing the blue-team robots to continue their collective task.
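The toy simulation below gives a sense of how accumulated trust scores can protect a consensus task like the one described above. It is a sketch under stated assumptions, not the lab's algorithm: the per-round trust observations are fixed hypothetical numbers standing in for the signal-processing step, every robot hears every other robot, all cooperative robots see the same observations, and headings are averaged arithmetically, which only makes sense away from the 0/360 degree wrap-around.

```python
def run_consensus(headings, malicious, observations, rounds=30, use_trust=True):
    """Average headings across agents, optionally ignoring distrusted senders.

    headings: initial heading per agent, in degrees.
    malicious: set of agent indices that never update their broadcast value.
    observations: hypothetical per-round trust observation in [0, 1] per sender.
    """
    values = list(headings)
    evidence = [0.0] * len(values)  # running sum of (observation - 0.5) per sender
    for _ in range(rounds):
        for j, obs in enumerate(observations):
            evidence[j] += obs - 0.5
        if use_trust:
            trusted = {j for j, e in enumerate(evidence) if e >= 0.0}
        else:
            trusted = set(range(len(values)))  # naive: accept every message
        new_values = list(values)
        for i in range(len(values)):
            if i in malicious:
                continue  # attackers keep broadcasting the same value
            pool = trusted | {i}
            new_values[i] = sum(values[j] for j in pool) / len(pool)
        values = new_values
    return values, evidence


headings = [10.0, 20.0, 30.0, 40.0, 180.0, 180.0]  # last two are red-team agents
malicious = {4, 5}
observations = [0.8, 0.9, 0.7, 0.8, 0.3, 0.2]      # assumed trust observations

protected, evidence = run_consensus(headings, malicious, observations)
naive, _ = run_consensus(headings, malicious, observations, use_trust=False)
print("with trust:", [round(v, 1) for v in protected[:4]])
print("without trust:", [round(v, 1) for v in naive[:4]])
```

With trust scores, the four cooperative robots settle on the average of their own headings; without them, the two attackers drag the whole group toward the heading they keep broadcasting.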

Experimental setup where cooperative blue-team robots are attacked by adversarial red-team agents. Credit: Thomas Kaminsky, Hammad Izhar, Áron Vékássy / Harvard REACT Lab

The researchers also argue that cy-trust must be built into policy and regulation before it can gain public acceptance, especially as autonomous coordinated systems are already on the rise. Ride-share vehicle fleets are being deployed in cities like Phoenix and San Francisco. Truck platooning and automated convoys are under active development in an attempt to streamline supply chains. And automated warehouses, like those that power Amazon fulfillment centers, already rely on fleets of robots, although in a controlled environment. Moving such systems into the open world is an exciting and logical next step, Gil says.

The interdisciplinary survey paper on enabling trust in cyber-physical systems "could not come at a more important time," said Andrea Goldsmith, paper co-author and president of Stony Brook University. "As we move into a world where so many of our physical systems consist of multiple agents controlled by AI in the cloud, we require a rigorous framework for their design that is secure and robust against malicious agents. Our paper provides a comprehensive roadmap of state-of-the-art techniques and new research frontiers to design secure robust collaborative multiagent systems."

The paper's co-authors are Michal Yemini of Bar-Ilan University in Israel; Arsenia Chorti of ENSEA in France; Angelia Nedić of Arizona State University; H. Vincent Poor of Princeton University; and Andrea J. Goldsmith of Stony Brook University.



Press Contact

Anne J. Manning | amanning@seas.harvard.edu