Musical Chairs project and team leads Tamar Sella, Jason Wang, Suvin Sundararajan, Peggy Yin and Yiting Huang (Matt Goisman/SEAS)

When we sit on chairs, do we leave anything behind? When we interact with objects, do they remember us? And if those objects could transmit their memories back to us, what might it sound like?

These are some of the ideas Peggy Yin and her student organization Conflux explored in “Musical Chairs,” a trio of wooden chairs, installed in Harvard Yard during ARTS FIRST Week, that can record and retransmit audio signals. The project combined elements of machine learning and computer science, musical performance, and carpentry to create a fusion of art and technology.

“My ultimate goal is to create a space where people can have natural conversations, but also reflect afterwards on the way their words and expressions can impact other people, and give people the time and space to express themselves authentically and honestly,” said Yin, Conflux’s co-founder and a second-year student studying computational neuroscience and history of art and architecture. “I’ve always been interested in how technology, specifically media technology, can really help us build empathy and understanding between people, and how it can augment our ability to communicate and interpret other people’s communication signals.”

The Musical Chairs soundscape plays as Harvard Yard visitors sit down. (Conflux)

Each chair plays harp music when specialized sensors detect that someone is sitting in it. Microphones pick up conversations, and computers then use machine learning algorithms to extract audio data such as the frequency and tempo of people’s voices. The software then composes music from pre-recorded samples of Harvard students’ voices based on that data and sends it to transducers in the chair’s armrests. By putting an elbow on the armrest and an ear to their hand, a listener can hear the musical soundscape of past conversations through bone conduction.
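For readers curious what "extracting frequency" from a voice recording can look like, here is a minimal sketch. It is not the team's code: the article does not describe their algorithms, and this example uses a plain discrete Fourier transform rather than machine learning. The function names `dominant_frequency` and `frequency_to_midi_note`, the 8 kHz sample rate, and the synthetic test tone are all assumptions made for illustration.

```python
import cmath
import math


def dominant_frequency(samples, sample_rate):
    """Return the frequency in Hz of the strongest DFT bin, ignoring DC.

    Hypothetical helper: a naive O(n^2) discrete Fourier transform,
    fine for a short window but far too slow for real-time use.
    """
    n = len(samples)
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2):
        acc = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n)
            for t in range(n)
        )
        mag = abs(acc)
        if mag > best_mag:
            best_bin, best_mag = k, mag
    return best_bin * sample_rate / n


def frequency_to_midi_note(freq_hz):
    """Map a frequency to the nearest MIDI note number (A4 = 440 Hz = 69)."""
    return round(69 + 12 * math.log2(freq_hz / 440.0))


# Assumed input: a synthetic 220 Hz tone standing in for a voice recording.
sample_rate = 8000   # samples per second (assumption)
window = 400         # 50 ms of audio, giving 20 Hz frequency resolution
tone = [math.sin(2 * math.pi * 220 * t / sample_rate) for t in range(window)]

pitch = dominant_frequency(tone, sample_rate)
note = frequency_to_midi_note(pitch)
print(pitch, note)
```

A music generator could use a note number like this to pick which pre-recorded sample to play. Real speech is much messier than a pure tone, which is one reason a production system would likely rely on more robust feature extraction.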

“Some people who came by earlier sat down and had a conversation, and I really loved that they were allowing the soundscape to just occur,” Yin said. “At the end, one of them said it was really relaxing and they wished they had one in their home. For them to have had a completely normal conversation while listening to the music, and then say that after, really showed me they were allowing the music to support their conversation in a positive way without disrupting it.”

Musical Chairs project lead Peggy Yin, center, demonstrates the bone conduction technique in front of team leads Tamar Sella and Jason Wang in Harvard Yard. (Matt Goisman/SEAS)

As the chairs were being built, students from the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) led the way in getting the hardware and software to work. Suvin Sundararajan, a bioengineering concentrator, 3D-printed the sensors and acted as hardware lead, while computer science and math student Jason Wang designed the machine learning algorithms and a distributed computing network powerful enough to extract audio data in real time.

“I’m a machine learning guy, so I really like playing around with this type of stuff,” Wang said. “The most challenging part was figuring out how to process audio features in real time, because normally they take a lot of computing. We had to put in three MacBook Pros to get that running in real time and figure out how to communicate these features to the software that’s making the music.”

Yin’s project was selected for a Public Arts grant from the Harvard Office of the Arts. SEAS helped bring the project to fruition through funding earmarked for SEAS-affiliated student organizations via the Teaching and Learning group. The sensors and electronics were also designed at SEAS. The woodworking and carpentry, meanwhile, were completed at the Art, Film, and Visual Studies Wood Shop.

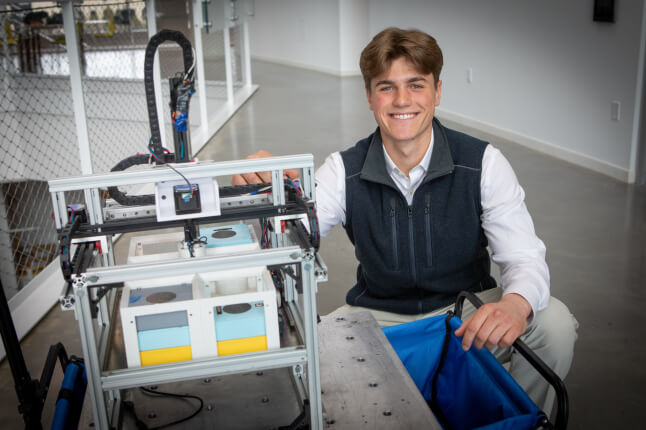

A look at the central microphone box, hardware and wiring enabling the soundscape of Musical Chairs. (Matt Goisman/SEAS)

“I was familiar with bone conduction through wood, and I was really interested in the idea of a design that’s compatible with and supports both technology and a conceptual idea that comes together through audio and visual experiences,” said Tamar Sella, a second-year studying comparative literature and art, film and visual studies, who led the chair design and build team. “Each portion of the chair had its own set of problems to tackle, which took a lot of communication between the design team, as well as technological aspects that had to be compatible.”

Co-founded by Yin and second-year student Alice Cai this year, Conflux has already sponsored multiple projects at the Science and Engineering Complex. The group organized an interactive multimedia art installation called “Liminal Interfaces” during the winter session, and last fall teamed with hAR/VRd to create the “Nervous Network,” which combined heart monitors, LED light strips and virtual reality headsets to depict how the human body reacts to fear.

“‘Musical Chairs’ came down to this idea of how we create an environment that invites people to lean in, but also take time to reflect and lean out,” Yin said. “We wanted to create an environment for discourse.”

Topics: REEF Makerspace, AI / Machine Learning, Computer Science, Electrical Engineering, Materials Science & Mechanical Engineering, Student Organizations

Press Contact

Matt Goisman | mgoisman@g.harvard.edu